The 26th annual Black Hat USA 2023 conference and the 31st annual DEF CON conference took place at the beginning of August in Las Vegas, bringing together some of the biggest companies and individuals in cybersecurity and hacking.

One major takeaway from the two conferences was that AI systems and platforms are increasingly vulnerable to cyberattacks and scams. As a result, major corporations are seeking help from hackers, AI experts, computer scientists, and others.

DEF CON’s Generative Red Team Challenge

The DEF CON hacking conference hosted the highly anticipated Generative Red Team Challenge, in which 2,200 competitors attempted to break through the guardrails and expose vulnerabilities in eight different large language models (LLMs). During a 50-minute session, each competitor received a list of challenges to attempt on a randomly assigned LLM, using an evaluation platform developed by Scale AI. The challenge categories covered prompt hacking, security, information integrity, internal consistency, and societal harms.

Participants competed for points, and the top scorer took home the grand prize: an NVIDIA GPU. Any bugs discovered during the challenge will be disclosed to the public in February following industry-standard responsible disclosure practices. The goal is to give developers time to fix any security holes before they can be exploited.

As AI Village founder Sven Cattell said in a statement, “Traditionally, companies have solved this problem with specialized red teams. However, this work has largely happened in private. The diverse issues with these models will not be resolved until more people know how to red team and assess them.”


Participating in the challenge were major generative AI developers such as Anthropic, Google, Microsoft, OpenAI, and NVIDIA. The White House Office of Science and Technology Policy also expressed its support for the exercise, which coincided with a set of recently announced White House initiatives aimed at improving the safety and security of AI models.

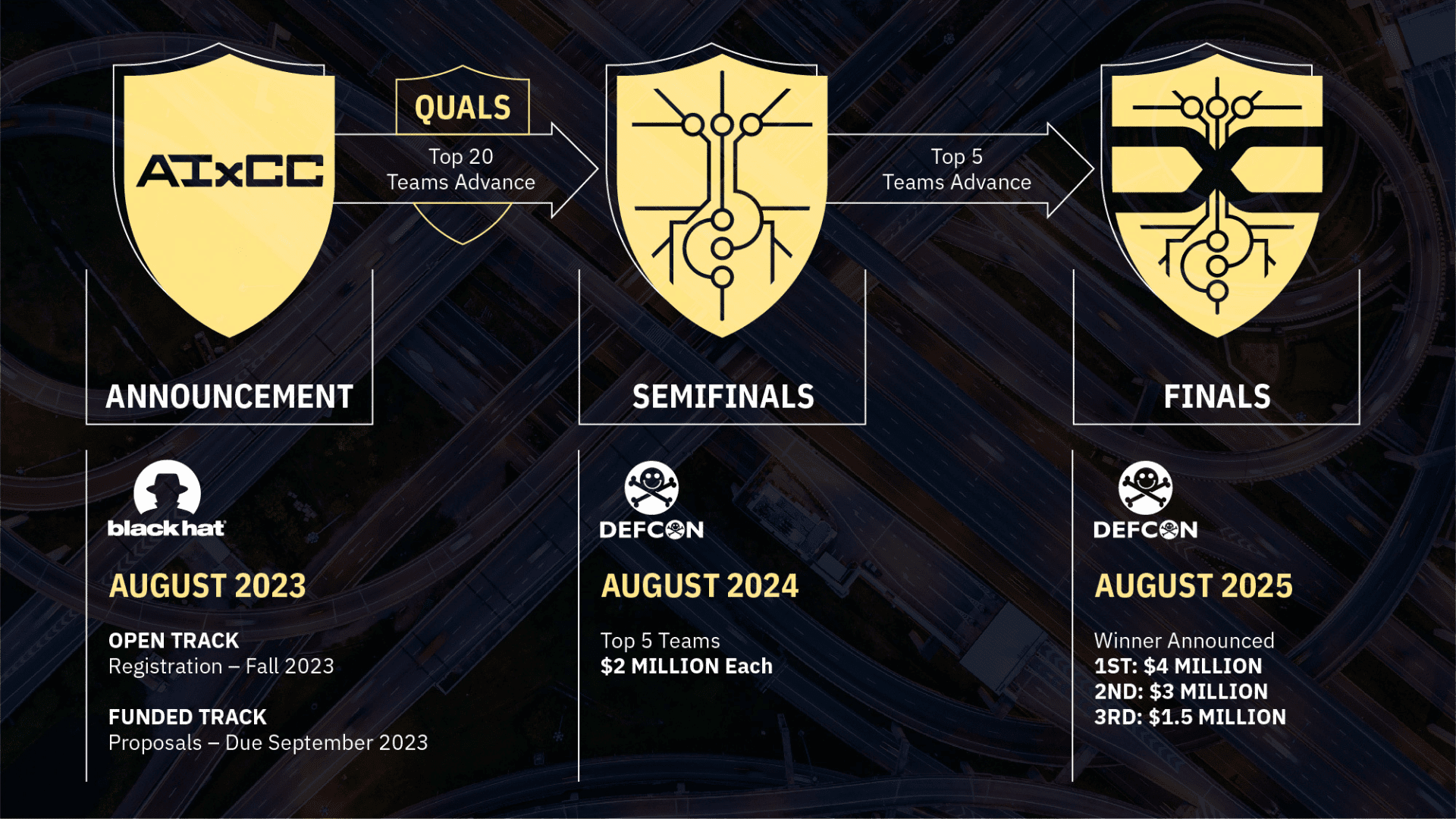

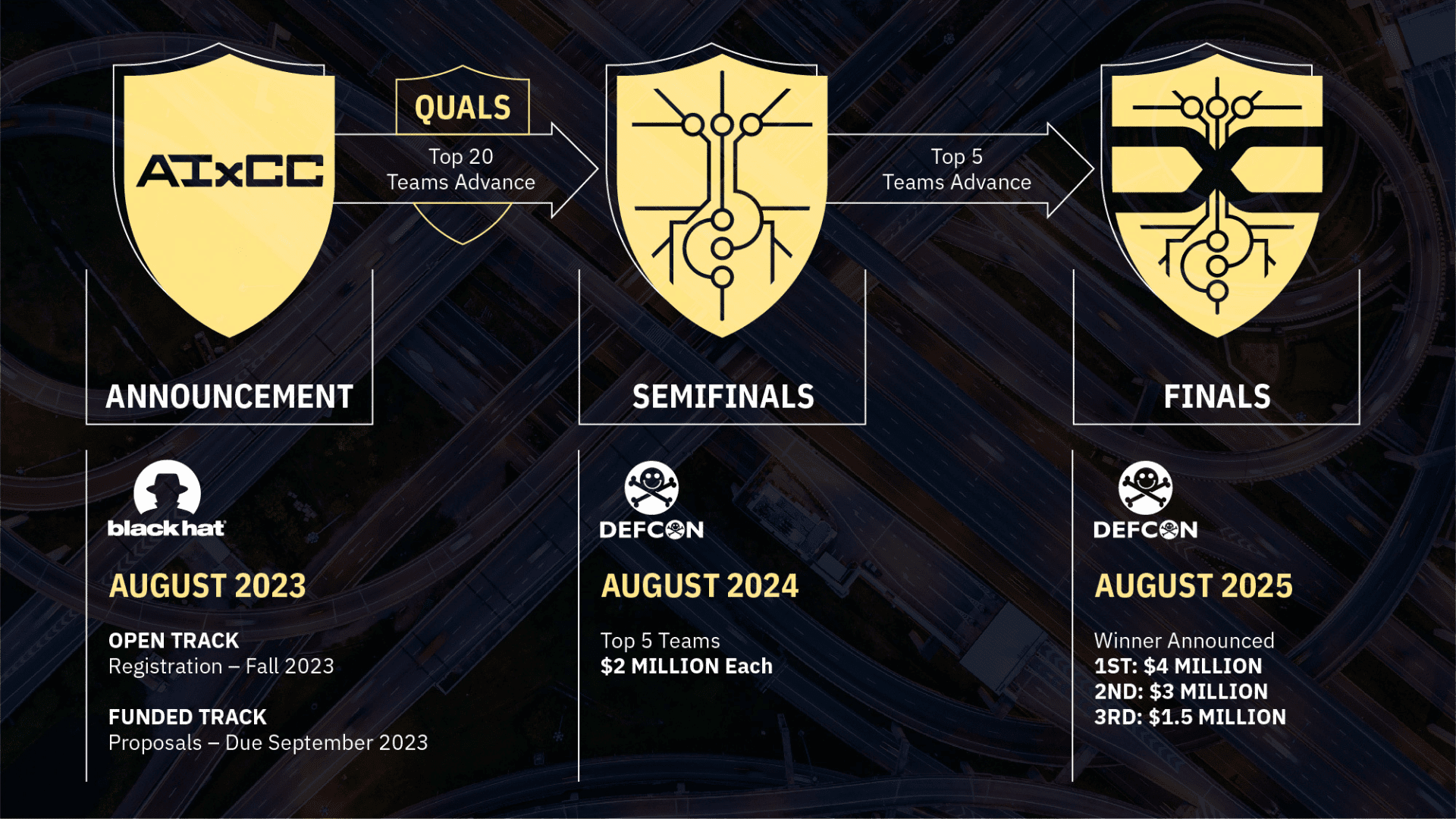

Black Hat 2023’s AI Cyber Challenge

The Black Hat 2023 conference took place in Las Vegas the same week. During its opening keynote, the Defense Advanced Research Projects Agency (DARPA) announced the AI Cyber Challenge (AIxCC), a two-year AI cybersecurity competition. Unlike the Generative Red Team Challenge, AIxCC does not involve hacking into existing LLMs and systems. Instead, the competition calls on computer scientists, AI experts, software developers, and cybersecurity specialists to build AI-driven cybersecurity tools that secure the country's critical infrastructure.

Leading AI companies such as Google, Microsoft, and OpenAI will work with DARPA to make their cutting-edge technology and expertise available to competitors, enabling them to develop state-of-the-art cybersecurity systems. As Perri Adams, DARPA's AIxCC program manager, said, "If successful, AIxCC will not only produce the next generation of cybersecurity tools but will show how AI can be used to better society by defending its critical underpinnings."

AIxCC offers two tracks for participation: the Funded Track and the Open Track. In the Funded Track, up to seven small businesses selected through a Small Business Innovation Research (SBIR) solicitation will receive $1 million each. Open Track competitors will register with DARPA through the competition website and will participate without DARPA funding.

The semifinals will be held at DEF CON 2024, with the finals taking place at DEF CON 2025. The top five teams in the semifinals will each receive $2 million and advance to the finals, where the first-, second-, and third-place finishers will take home an additional $4 million, $3 million, and $1.5 million, respectively.