Carnegie Mellon University’s new artist-in-residence is less of a Rembrandt and more of an R2-D2. After ChatGPT shocked the world with its abilities, researchers at Carnegie Mellon University created an AI-powered robot that, according to a press release, can create beautiful art on physical canvas with the help of simple text prompts.

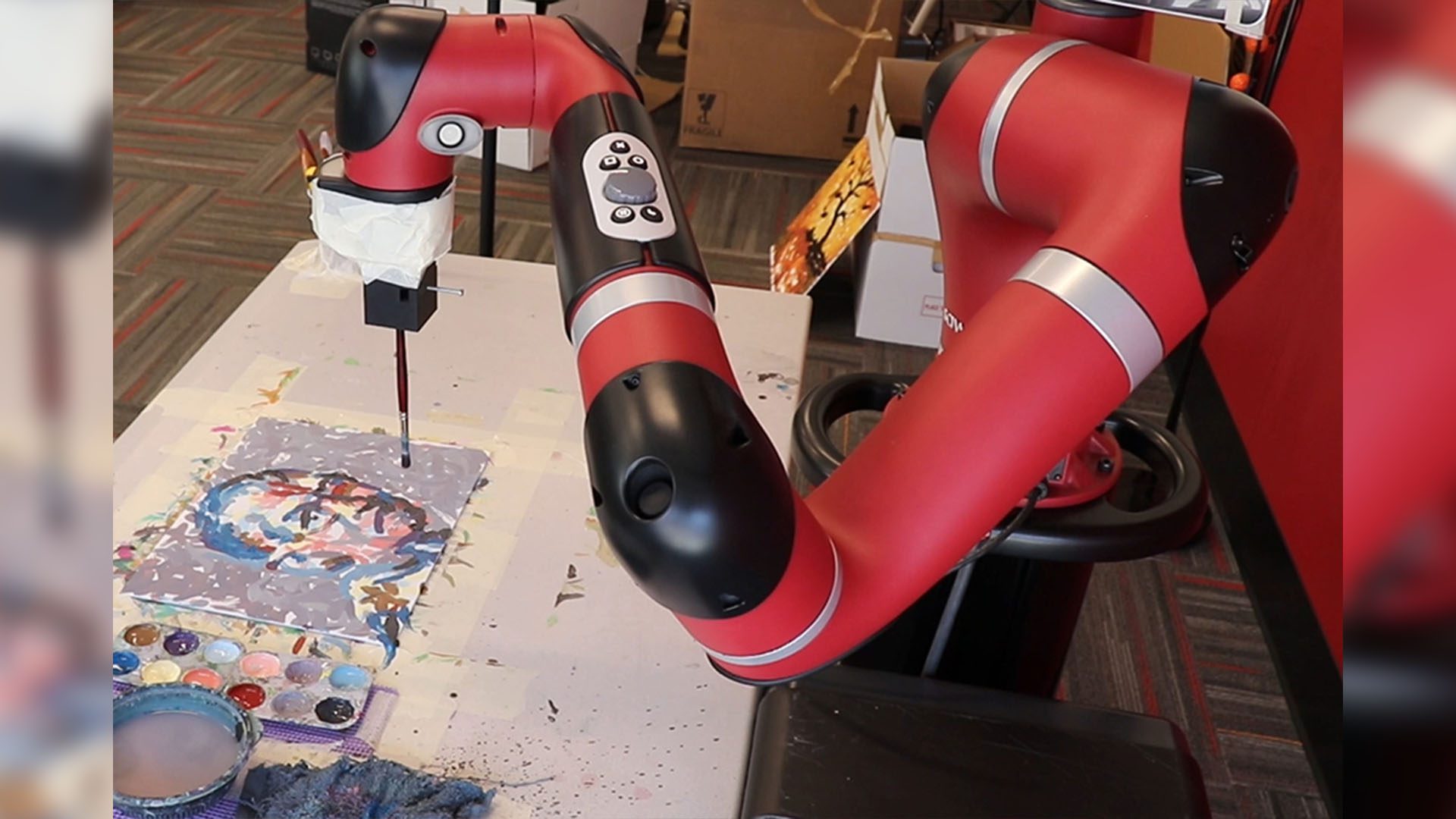

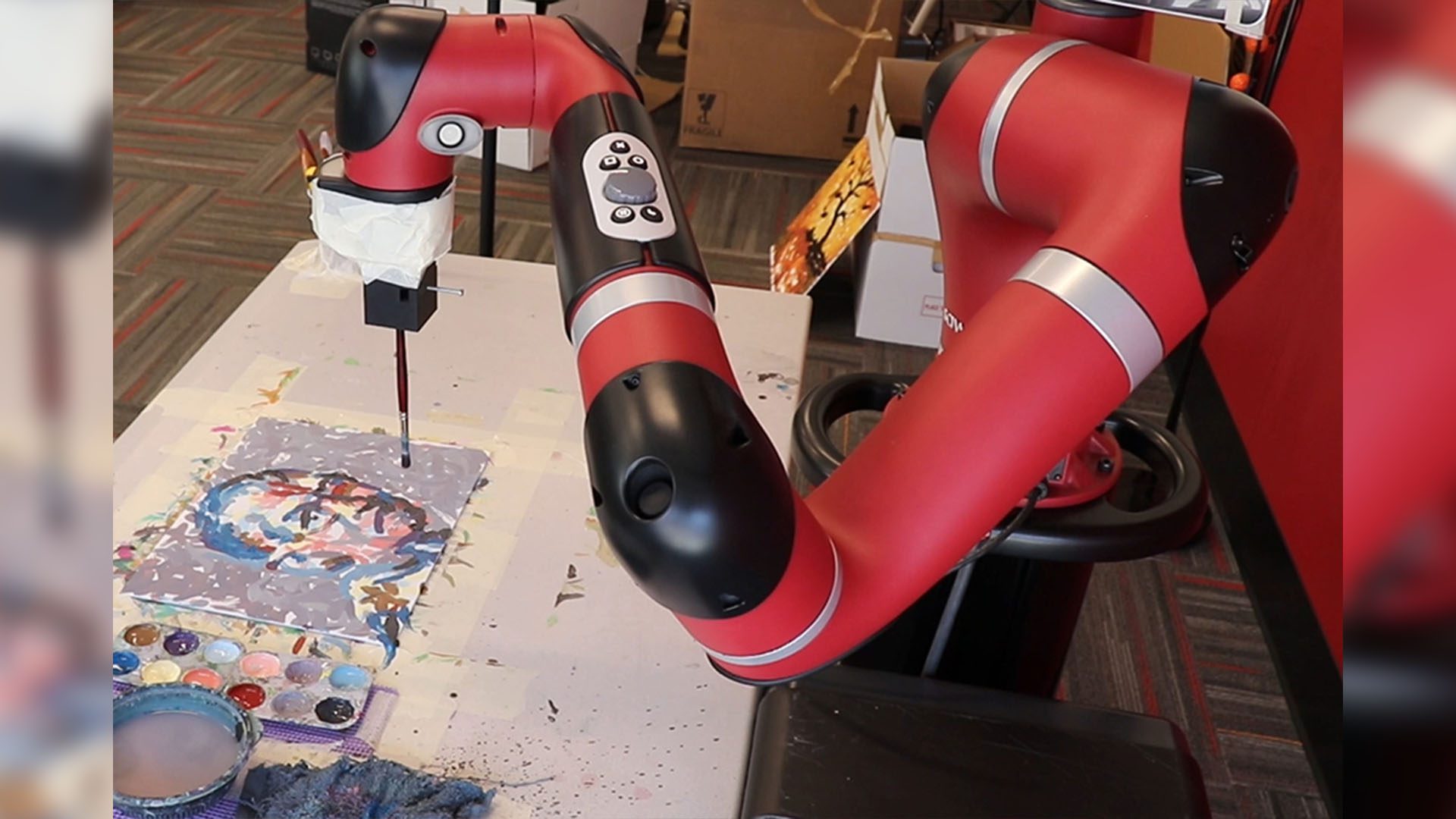

The FRIDA robot (Framework and Robotics Initiative for Developing Arts) is an AI-powered robotic arm with a paintbrush taped to it that is capable of creating unique paintings using photographs, works of art, human input, and even music.

The robot uses AI models similar to those powering tools such as OpenAI’s ChatGPT and DALL-E 2, which generate text or an image in response to a prompt. FRIDA takes this a step further by using its AI robotics system to produce physical paintings. The project is led by Peter Schaldenbrand, a Ph.D. student at CMU’s Robotics Institute (RI), with RI faculty members Jean Oh and Jim McCann, and has inspired students and researchers across CMU.

Although FRIDA, named after Mexican painter Frida Kahlo, requires basic input such as text descriptions or existing images, the AI software is capable of working more abstractly. For example, in one instance the research team played ABBA’s “Dancing Queen” and asked FRIDA to paint it.

One of the most interesting aspects of FRIDA is its tolerance for imprecision. Most AI systems are designed to be as accurate as possible, but FRIDA is allowed to make mistakes: it monitors its own progress with an overhead camera and adjusts the remainder of the painting accordingly. Speed isn’t prioritized either, and each painting takes hours to complete.
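The look-then-adjust behavior described above can be illustrated with a toy closed-loop sketch. This is not FRIDA's actual planner: the one-dimensional "canvas", the `paint_with_feedback` function, and the noise model are all hypothetical stand-ins chosen only to show how re-observing the canvas after every imprecise stroke lets later strokes compensate for earlier errors.

```python
import random


def paint_with_feedback(target, steps=200, noise=0.2, seed=0):
    """Toy closed-loop painter on a 1-D 'canvas' of brightness values.

    Hypothetical illustration only; not the method used by FRIDA.
    """
    rng = random.Random(seed)
    canvas = [0.0] * len(target)
    for _ in range(steps):
        # "Look" at the canvas (a stand-in for the overhead camera) and
        # find the cell that is currently furthest from the target.
        errors = [t - c for t, c in zip(target, canvas)]
        i = max(range(len(target)), key=lambda k: abs(errors[k]))
        # Intend to correct half of that error, but let the stroke land
        # imprecisely (up to +/- `noise` relative deviation).
        intended = 0.5 * errors[i]
        actual = intended * (1.0 + rng.uniform(-noise, noise))
        canvas[i] += actual
    return canvas


def mean_abs_error(target, canvas):
    """Average absolute difference between target and painted canvas."""
    return sum(abs(t - c) for t, c in zip(target, canvas)) / len(target)


target = [0.2, 0.8, 0.5, 1.0]
canvas = paint_with_feedback(target)
print(mean_abs_error(target, canvas))
```

Because each stroke is planned from the observed canvas rather than from the original plan, individual strokes can be sloppy while the overall result still converges toward the target.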

Some of FRIDAs paintings #Robotics #creativeAI pic.twitter.com/5AX7lA8oxW

— FRIDA Robot Painter (@FridaRobot) December 5, 2022

FRIDA spends an hour learning how to use its paintbrush, then uses large vision-language models trained on massive data sets that pair text and images scraped from the internet (such as OpenAI’s Contrastive Language-Image Pre-Training, or CLIP) to understand the input. Other image-generating tools such as OpenAI’s DALL-E 2 use large vision-language models to produce digital images.

More sophisticated than similar bots, FRIDA analyzes its brushwork in real time and makes adjustments accordingly. One of the most difficult technical challenges is reducing the simulation-to-real gap, the difference between what FRIDA composes digitally and what it paints on the physical canvas. To narrow that gap, FRIDA uses an idea known as real2sim2real: the robot’s actual brush strokes are used to train the simulator so that it reflects the physical capabilities of the robot and its painting materials.
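As a rough illustration of the real2sim2real idea, the sketch below calibrates a tiny "simulator" from measured strokes and then uses it to choose a command for the real robot. Everything here is an assumption made for the example: the calibration numbers are invented, the linear relationship between brush depth and stroke width is a simplification, and the helpers `fit_line`, `simulate_width`, and `depth_for_width` are hypothetical names rather than part of FRIDA's software.

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit of y = a * x + b."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    a = sxy / sxx
    b = mean_y - a * mean_x
    return a, b


# real -> sim: hypothetical calibration strokes. Each pair is a commanded
# brush depth (mm) and the stroke width (mm) measured on the canvas.
depths = [1.0, 2.0, 3.0, 4.0]
widths = [2.1, 3.9, 6.2, 7.8]
a, b = fit_line(depths, widths)


def simulate_width(depth):
    """Simulator: predict the stroke width for a commanded depth."""
    return a * depth + b


def depth_for_width(width):
    """sim -> real: invert the simulator to pick a depth for a desired width."""
    return (width - b) / a


print(round(a, 2), round(b, 2))
print(round(depth_for_width(5.0), 2))
```

The point of the sketch is the direction of data flow: measurements of real strokes shape the simulator, and plans made in that calibrated simulator are what get sent back to the physical brush.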

Researchers involved in FRIDA’s development have described its art as “whimsical and impressionistic.” Peter Schaldenbrand, a Ph.D. student at Carnegie Mellon University, said, “FRIDA is a robotic painting system, but FRIDA is not an artist… FRIDA is not generating the ideas to communicate. FRIDA is a system that an artist could collaborate with. The artist can specify high-level goals for FRIDA, and then FRIDA can execute them.”

Rather than replacing artists with AI, FRIDA’s creators see the project as a way for artists and AI to collaborate. Jean Oh, a faculty member at the university, said, “We want to really promote human creativity through FRIDA. For instance, I personally wanted to be an artist. Now, I can actually collaborate with FRIDA to express my ideas in painting.”

FRIDA even displays a color palette on its computer screen for a human to mix and provide to the robot. Automatic paint mixing is currently in development, led by Jiaying Wei, a master’s student in the School of Architecture, with Eunsu Kang, faculty in the Machine Learning Department. According to the team’s research paper, “FRIDA is a robotics initiative to promote human creativity, rather than replacing it, by providing intuitive ways for humans to express their ideas using natural language or sample images.”

In the future, researchers hope to refine FRIDA’s skills and expand its capabilities to include sculpting. “FRIDA is a project exploring the intersection of human and robotic creativity,” said Jim McCann, another faculty member. McCann added, “FRIDA is using the kind of AI models that have been developed to do things like caption images and understand scene content and applying it to this artistic generative problem.”